Technology & Privacy

April 30, 2026 | Max Rieper

Online children's safety has become one of the most active areas of state legislation this year, with lawmakers introducing nearly 300 bills on the issue. Lawmakers are targeting how companies collect children's data and expose them to harmful material, predatory behavior, cyberbullying, and algorithmically driven addiction. Recent jury verdicts — including a California case finding that Meta and Google deliberately designed addictive features, and a New Mexico case finding that Meta violated consumer protection laws for failing to protect minors — have only added momentum. The legislative push spans a wide range of approaches, but the overarching trend is clear: the scope of what falls under "protecting children online" keeps expanding.

The first wave of state online child safety legislation targeted adult websites, and the approach has spread rapidly. Earlier this month, West Virginia enacted a bill (WV HB 4412) to become the 26th state to require age verification to access sites with sexual content. These laws typically apply to sites where a "substantial portion" (usually one-third) of the content is "materially harmful" to minors. States typically define "materially harmful" by adapting to minors the three-pronged obscenity test laid out by the U.S. Supreme Court: the material (1) appeals to the prurient interest, as judged by the average person applying contemporary community standards; (2) depicts or describes sexual conduct in a patently offensive way; and (3) lacks serious literary, artistic, political, or scientific value for minors.
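As a rough illustration of how a "substantial portion" threshold operates, the sketch below checks whether the restricted share of a site's content exceeds one-third. The function name, the choice to measure by item count, and the strict "more than" comparison are assumptions for illustration only; statutes differ on how the portion is measured and on the exact wording of the threshold.

```python
from fractions import Fraction

# Assumed threshold: the one-third figure commonly used in these laws.
ONE_THIRD = Fraction(1, 3)


def exceeds_substantial_portion(restricted_items: int,
                                total_items: int,
                                threshold: Fraction = ONE_THIRD) -> bool:
    """Return True if the restricted share of content is above the threshold.

    Hypothetical helper: counts individual content items, which is only one
    of several ways a statute might measure a site's content.
    """
    if total_items <= 0:
        raise ValueError("total_items must be positive")
    if not 0 <= restricted_items <= total_items:
        raise ValueError("restricted_items must be between 0 and total_items")
    # Fraction avoids floating-point rounding right at the one-third boundary.
    return Fraction(restricted_items, total_items) > threshold
```

Under these assumptions, a site with 34 restricted items out of 100 would be covered, while a site with exactly one-third restricted content would not.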

The second and currently most active front is social media, where lawmakers are pursuing two distinct regulatory strategies: restricting who can access platforms and dictating how those platforms are designed.

Some proposals set hard age limits, such as a Florida law passed in 2024 (FL HB 3) that prohibits anyone under 14 from having an account. Others require parental consent before minors of a certain age can join a platform or receive personalized algorithmic feeds.

Other bills go further, targeting the features that keep young users engaged. Proposals would restrict auto-play, infinite scroll, and push notifications during school hours or at night. Lawmakers have also proposed requiring platforms to limit account visibility for minors, prohibit contact from strangers, restrict the sharing of precise location data, and filter out content promoting violence, sexual material, or eating disorders. These design-side mandates represent a notable shift. Rather than simply gatekeeping access, lawmakers are attempting to reshape how platforms function.

Some lawmakers are also looking beyond the platforms themselves to the gatekeepers of the digital ecosystem. Alabama (AL HB 161) and Utah (UT HB 498) each passed bills this year requiring app stores to verify the age of users and send an age category signal to app developers to enforce restrictions. Under these laws, app stores must also obtain verifiable parental consent before a minor can download or purchase an app or make in-app purchases. A similar law enacted in Texas in 2025 (TX SB 2420) has been blocked by courts on constitutional grounds.
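The app store model described above can be sketched in a few lines: the store maps a verified age to a category, passes that category to the developer as a signal, and gates downloads and purchases by minors on parental consent. Everything here is hypothetical, including the class and function names and the age brackets; each statute defines its own categories and consent procedures.

```python
from dataclasses import dataclass
from enum import Enum


class AgeCategory(Enum):
    # Assumed brackets for illustration; actual categories vary by statute.
    CHILD = "under_13"
    YOUNGER_TEEN = "13_to_15"
    OLDER_TEEN = "16_to_17"
    ADULT = "18_plus"


def age_category(verified_age: int) -> AgeCategory:
    """Map an age the store has already verified to a coarse category."""
    if verified_age < 0:
        raise ValueError("verified_age must be non-negative")
    if verified_age < 13:
        return AgeCategory.CHILD
    if verified_age < 16:
        return AgeCategory.YOUNGER_TEEN
    if verified_age < 18:
        return AgeCategory.OLDER_TEEN
    return AgeCategory.ADULT


@dataclass(frozen=True)
class AgeSignal:
    """What the store shares with a developer: a category, not a birthdate."""
    category: AgeCategory
    parental_consent: bool


def may_download_or_purchase(signal: AgeSignal) -> bool:
    """Adults proceed; minors need verifiable parental consent on file."""
    return signal.category is AgeCategory.ADULT or signal.parental_consent
```

Sharing only a category rather than an exact age or birthdate is one way to limit how much personal data flows from the store to each developer.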

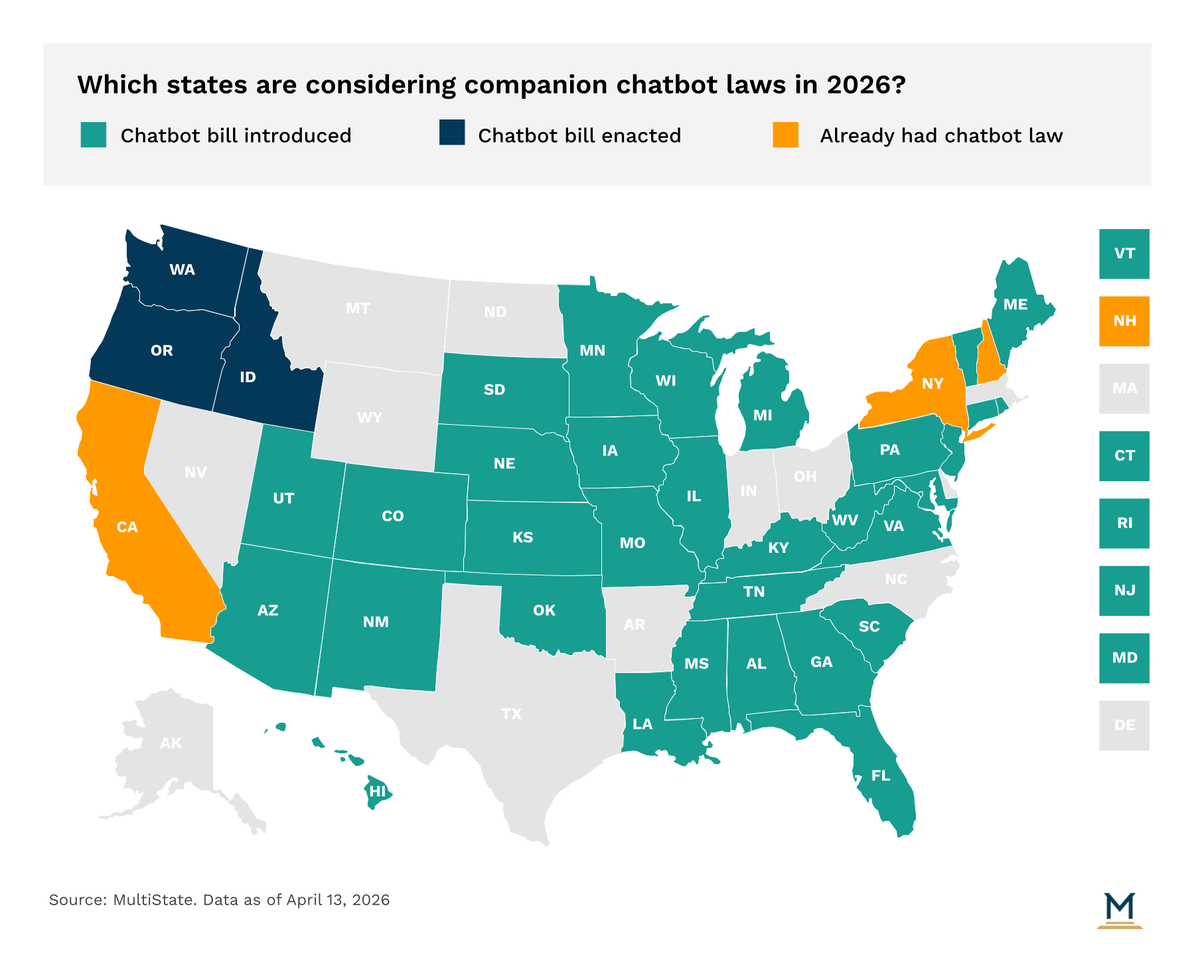

The newest frontier, and perhaps the clearest illustration of how far the "child safety" frame has expanded, involves AI-powered companion chatbots. These platforms, designed to simulate personal relationships or friendships, have recently drawn lawsuits alleging that operators failed to protect minors from harmful and sexually explicit conversations or mishandled users expressing suicidal ideation. In response, state lawmakers have introduced bills ranging from outright bans on minors accessing companion chatbots to requirements that operators prevent chatbots from claiming sentience, initiating sexual conversations, or engaging in manipulative behavior. Many proposals also require periodic reminders that the user is not interacting with a human and protocols for responding when a user expresses ideas of self-harm. Idaho (ID SB 1297), Oregon (OR SB 1546), and Washington (WA HB 2255) have already enacted bills this session regulating how minors can use companion chatbots, and bills may be on the verge of being enacted in Maine (ME LD 2162) and Nebraska (NE LB 525).

Across all areas, age verification presents significant technical challenges.

Most systems rely on document-based verification, such as uploading a government-issued ID, or data-based methods that attempt to match a user against third-party databases. Both approaches raise concerns about accuracy, feasibility, data security, and the risk of breaches involving sensitive personal information.

Age verification and other laws intended to protect children online also face significant constitutional hurdles. Courts have increasingly scrutinized age-verification requirements under the First Amendment, particularly where they burden adults' access to lawful content. Free speech advocates argue that courts should apply strict scrutiny, which requires narrowly tailored policy solutions. But the U.S. Supreme Court applied the lower standard of intermediate scrutiny in a case challenging a Texas age verification law passed in 2023 (TX HB 1181) to limit access to adult websites, allowing the law to stand. Lower courts, however, continue to apply the stricter standard to social media laws.

Potential federal preemption hangs over all of these efforts. Congress has debated a proposed Kids Online Safety Act for several years, and recent lawsuits could renew momentum. But until federal legislation materializes, states will continue building a patchwork of overlapping and sometimes conflicting requirements that tech companies will have to navigate jurisdiction by jurisdiction.

The broader trend, though, may matter more than any individual bill. What began as a narrowly targeted effort to keep minors from accessing adult websites has expanded into a sweeping regulatory movement that now touches social media design, app store operations, and AI-powered chatbots. Each new technology that captures legislative attention gets folded into the same "child safety" frame, and the political dynamics make it difficult for any lawmaker to oppose a bill carrying that label. That poses a challenge for companies operating in the digital space, which must keep pace with an ever-broadening definition of what protecting children online requires.

MultiState’s team is actively identifying and tracking technology and privacy issues so that businesses and organizations have the information they need to navigate and effectively engage. If your organization would like to further track these or other related issues, please contact us.
